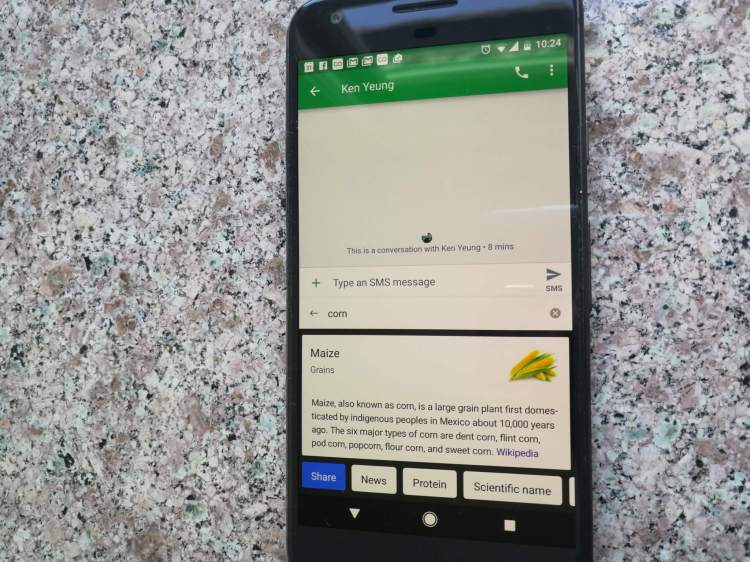

Google today described new research aimed at powering search query suggestions in its Gboard virtual keyboard app for Android. The approach involves training artificial neural networks on data that’s stored locally on mobile devices, instead of sending it all to Google’s fleet of servers.

In the past few days, Googlers have published academic papers on the two components involved: a concept called “federated learning” and the underlying privacy system, called “secure aggregation.”

“When Gboard shows a suggested query, your phone locally stores information about the current context and whether you clicked the suggestion. Federated Learning processes that history on-device to suggest improvements to the next iteration of Gboard’s query suggestion model,” Google research scientists Brendan McMahan and Daniel Ramage wrote in a blog post.

McMahan and Ramage foresee using these same technologies to improve photo rankings “based on what kinds of photos people look at, share, or delete,” as well as Gboard’s language models.

This research arrives nearly a year after Apple said that it was deploying a concept called “differential privacy” to turn out smart QuickType and emoji suggestions in iOS 10.

Google’s system starts with the current model for making query suggestions, then learns over time from locally stored data, and then summarizes the changes with what Google calls an “update.” The update is what is sent back to Google in an encrypted fashion, and Google averages it with all of its other updates. The actual data stays local, and Google can only decrypt its average update if there are hundreds or thousands of users contributing to it.
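To make that loop concrete, here is a minimal sketch of the general idea, not Google’s actual implementation. It assumes a tiny linear model trained with plain gradient steps. The function names (`local_update`, `federated_average`), the learning rate, and the data layout are all hypothetical, and the encryption step is left out entirely.

```python
def local_update(global_weights, local_examples, lr=0.1):
    """Runs on the device: train on local data, return only the weight change.

    local_examples is a list of (features, clicked) pairs that never leave
    the phone. The model here is a toy linear predictor (an assumption).
    """
    w = list(global_weights)
    for features, clicked in local_examples:
        pred = sum(wi * xi for wi, xi in zip(w, features))
        err = pred - clicked
        # One gradient step on squared error for this example.
        w = [wi - lr * err * xi for wi, xi in zip(w, features)]
    # The "update" is the difference from the model the device started with.
    return [wi - gi for wi, gi in zip(w, global_weights)]


def federated_average(global_weights, updates):
    """Runs on the server: average the per-device updates into the model."""
    n = len(updates)
    avg = [sum(u[i] for u in updates) / n for i in range(len(global_weights))]
    return [g + a for g, a in zip(global_weights, avg)]


# Two simulated devices, each with one locally stored example.
model = [0.0, 0.0]
update_a = local_update(model, [([1.0, 0.0], 1.0)])
update_b = local_update(model, [([0.0, 1.0], 1.0)])
model = federated_average(model, [update_a, update_b])
```

Only `update_a` and `update_b` cross the network in this sketch. The raw examples stay inside `local_update`.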

The Google paper on secure aggregation for federated learning acknowledges differential privacy, a co-inventor of which, Cynthia Dwork, works at Microsoft Research. Google has itself explored differential privacy in the past, including with its Randomized Aggregatable Privacy-Preserving Ordinal Response (RAPPOR) work that’s been tested in Chrome. Now Google is looking at going deeper into the area and also implementing its secure aggregation model with one of its more prominent apps. Secure aggregation depends heavily on small encrypted summaries of data instead of actual data, whereas differential privacy entails incorporating random noise into calculations with data. Gboard for Android currently has 500 million to 1 billion app installs, according to the Google Play Store.
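The difference between the two approaches can be sketched in a few lines. This is a heavily simplified illustration, not the protocol from Google’s paper. It assumes every pair of devices already shares a random mask (a seeded generator stands in for the key agreement), no device drops out, and updates are small integers. The names `mask_updates`, `aggregate`, and `noisy_sum` are hypothetical. The Laplace mechanism in `noisy_sum` is a standard differential privacy technique used here only for contrast; the article does not say which mechanism Apple or Google use.

```python
import random

MODULUS = 2**32  # assumed: arithmetic on updates is done modulo a fixed value


def mask_updates(updates, seed=0):
    """Secure-aggregation idea: each pair of devices shares a random mask.

    Device i adds the mask and device j subtracts it, so any single masked
    update looks random while the masks cancel in the sum.
    """
    rng = random.Random(seed)  # stand-in for pairwise key agreement
    masked = [list(u) for u in updates]
    for i in range(len(updates)):
        for j in range(i + 1, len(updates)):
            for k in range(len(updates[0])):
                m = rng.randrange(MODULUS)
                masked[i][k] = (masked[i][k] + m) % MODULUS
                masked[j][k] = (masked[j][k] - m) % MODULUS
    return masked


def aggregate(masked):
    """Server side: summing the masked updates recovers only the total."""
    dim = len(masked[0])
    return [sum(u[k] for u in masked) % MODULUS for k in range(dim)]


def noisy_sum(values, epsilon, sensitivity=1.0, rng=None):
    """Differential-privacy idea: add calibrated random noise to a result."""
    rng = rng or random.Random()
    scale = sensitivity / epsilon
    # The difference of two exponential draws follows a Laplace distribution.
    noise = rng.expovariate(1 / scale) - rng.expovariate(1 / scale)
    return sum(values) + noise


device_updates = [[1, 2], [3, 4], [5, 6]]
total = aggregate(mask_updates(device_updates))  # [9, 12]
```

In the first approach the server learns the exact total but nothing about any single device. In the second, the result itself is deliberately blurred.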

Update on April 7: Added detail on the secure aggregation and differential privacy.