With so much news about Fortnite lately, it’s easy to forget that Epic Games makes the Unreal Engine. But today, the game engine behind some of the most realistic games is taking center stage as Epic launches Unreal Engine 4.20.

The update for the entertainment creation tool will help developers create more realistic characters and immersive environments, and use them across games, film, TV, virtual reality, mixed reality, augmented reality, and enterprise applications. The new engine combines real-time rendering advancements with improved creativity and productivity tools.

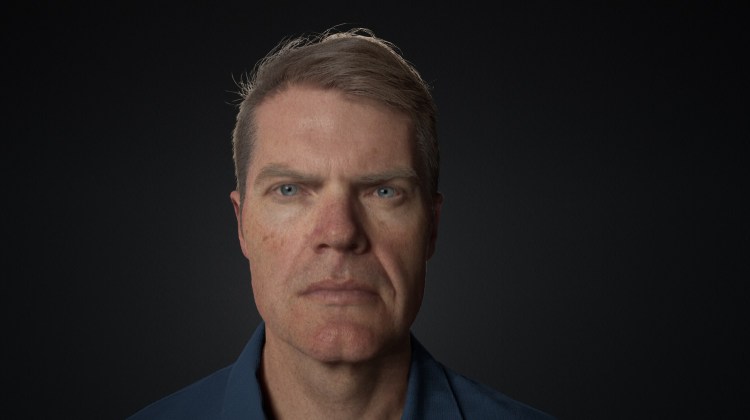

It makes it easier to ship blockbuster games across all platforms, as Epic had to make hundreds of optimizations on iOS, Android, and the Nintendo Switch in particular to build Fortnite. Now those advances are being rolled into Unreal Engine 4.20 for all developers. I spoke with Kim Libreri, chief technology officer at Epic Games, about the update and how the development of Fortnite helped the company figure out what it needed to provide in the engine.

Libreri said that in non-game enterprise applications, new features will drive photorealistic real-time visualization across automotive design, architecture, manufacturing, and more. The features include things like a new level of detail system, which determines the visual details a player will see on platforms such as mobile devices or high-end PCs.

Well over 100 mobile optimizations developed first for Fortnite will come to all 4.20 users, making it easier to ship the same game across all platforms. There are also tools for “digital humans,” which get the lighting and reflections just right so that it’s hard to tell what’s a digital human versus a real one.

All users now have access to the same tools used on Epic’s “Siren” (in the video above) and “Digital Andy Serkis” demos shown at this year’s Game Developers Conference. The engine also has support for mixed reality, virtual reality, and augmented reality. It can be used to create content for things like the Magic Leap One and Google ARCore 1.2.

Libreri filled me in on these details, but we also talked about the competition with Unity and what it means that Epic can use what it learns from creating its own games to try to outdo its rival.

Here’s an edited transcript of our interview.

Above: Kim Libreri is CTO of Epic Games.

GamesBeat: Tell me what you’re up to.

Kim Libreri: We’re talking about 4.20, Unreal Engine 4.20. The majority of the themes for this release are, we’re following up on what we said we would do at GDC. A lot of the things we showed and demonstrated and talked about at GDC this year are in this release. A large part of that is the mobile improvement, thanks to shipping Fortnite on iOS and soon Android. There’s a ton of work we’ve done to optimize the engine to allow you to make one game and scale across all those different platforms. All of our customers will find excellent upgrades in terms of performance on mobile devices.

Part of that was, we have the proxy LOD system we put in the engine. That allows for automatic LODs to be generated in a smart way. It’s similar to what Simplygon does, but it’s our own little spin on how to do that. That’s shipping. The other cool thing is, Niagara is shipping now in a usable state. We’re getting lots of interesting comments about how customers are finding it and how awesome it’s going to help their games look from a visual effects point of view. Niagara is the new particle system. We used to have a system called Cascade in Unreal Engine, and Niagara is what will ultimately replace Cascade.

You saw a couple of digital human demos we did at GDC. We’re shipping not just the code and the functionality, but also some demo content, thanks to our collaboration with Mike Seymour. He was kind enough to let us give out his digital likeness for people to better learn how to set up a photorealistic human. From a code perspective, we have better skin shaders that now allow double specular hits for skin. Also, light can transmit through the backs of ears and the sides of the nose. You get better subsurface scattering realism. We also have this screen space indirect illumination for skin, which allows light to bounce off cheekbones and into eye sockets. It matches what happens in reality.

Above: A digital human.

We showed a bunch of virtual production capabilities in the engine as well, cinematography inside a realtime world. The tool we showed, which was used to demonstrate a bunch of our GDC demos, is shipping with 4.20 as well as example content. You can drive a virtual camera with an iPad.

We’ve added new cinematic depth of field. It was built for the Reflections Star Wars demo. At this point we have the best depth of field in the industry. Not only is it accurate to what real cameras can do, it also deals gracefully with out-of-focus objects that are over a shot or in the background, which traditionally was a difficult thing in realtime engines. It’s also faster than the old circle depth of field we had before. It’s going to make games and cinematic content look way more realistic.

On the lighting side, the area lights that we showed at GDC are shipping. That allows you to light characters and objects in a better approximation of how you would do it in a live environment with real, physical lights. You get accurate-looking reflections and accurate-looking diffuse illumination. You get a closer match when you’re lighting with a soft box or diffusion in front of a light, the way you would light a fashion shoot or a movie or whatever. You can replicate all that stuff in the engine now.

On the enterprise side, we now support video input and output in the engine. If you’re a professional broadcaster and you’re doing a green-screen television presentation, similar to what you see in weather broadcasting and sports events, now you have the ability to pump live video from a professional-grade digital video camera straight into the engine, pull a chroma key, and then put that over the top of a mixed reality scene. Things like the Weather Channel are doing some cool stuff now. We see loads of broadcasters using the Unreal Engine for live broadcasting, and we just made that easier with support for AJA video I/O cards.

Again on the enterprise side, we have this thing called nDisplay, which allows you to use multiple PCs to render a scene across multiple display outputs. Say you’ve got one of the massive tiled LCD panels and you have a really super high-resolution display you need to feed to it. You can now have multiple PCs running Unreal Engine, all synchronized together, to drive these tiled video walls.

Shotgun is a very popular production management tool that a lot of studios use in film, television, and games to drive their artist management in terms of what assets they have to make and when they’re due and what the dependencies are. We’ve integrated that into Unreal Engine, so it’s very easy to track assets in and out of Unreal as your production is happening over time.

That’s the main story in terms of what we’re doing. There are lots of minute details that will be in the release docs, but that’s the big stuff.

Above: An environment made in Unreal.

GamesBeat: When was the last big update?

Libreri: That was prior to GDC, 4.19.

GamesBeat: What do you think is the most important thing you’re delivering for developers here?

Libreri: Right now, this concept that you can make one game and it ships on all platforms, from high-end PCs and consoles down to Switch and mobile phones, that’s a very powerful message for the future of gaming. You don’t have to be as restrictive as you normally would be. Quite often a lot of mobile games aren’t even ports. They’re new versions of a particular game to deal with the differences in hardware.

We’re in a great place now with mobile hardware being pretty powerful, and the fact that Unreal has this cool rendering abstraction capability that allows us to ship the same game content, game logic, on multiple platforms. Everyone can take advantage of what we’ve done on Fortnite. We’re seeing it. We showed ARK running on Switch at GDC. It’s exciting times.

GamesBeat: Do you think Fortnite has proven out the engine’s capability, then, as far as cross-platform goes?

Libreri: Yes, especially considering it’s a live game. We put out updates every week. When we do a new season pass, there’s a lot of stuff that goes up. Being able to do that once and push it to all platforms is a game-changer. We can be live. As you saw from the event in the game last weekend, when we launched the rocket worldwide at the same time—if we had to maintain multiple versions of the game, it just wouldn’t be possible to be as agile as we are. We just can’t wait to see what our customers in the gaming space will be able to do with that capability.