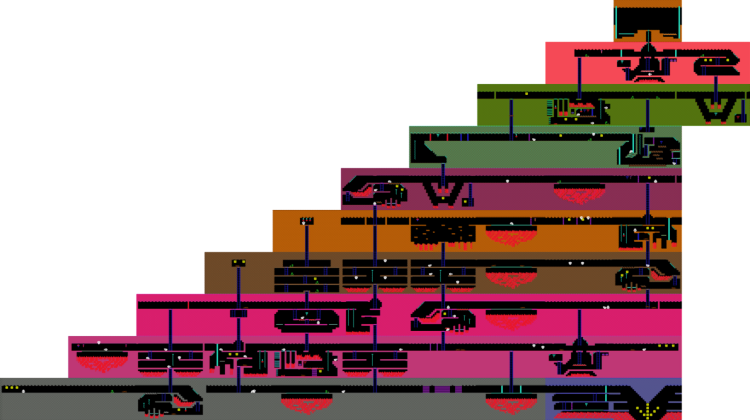

In 2015, Google subsidiary DeepMind published a landmark paper describing a system that could, given enough time and computational horsepower, learn to play a collection of Atari games like Breakout, Enduro, and Pong with superhuman proficiency. It failed to finish the more sophisticated Montezuma’s Revenge — a platformer that tasks players with avoiding laser gates, disappearing floors, fire pits, and other obstacles in pursuit of treasure — but AI systems have come a long way since then.

Case in point: researchers at RMIT University in Melbourne have developed a framework that not only played Montezuma’s Revenge quite skillfully but also learned from its mistakes in-game and identified subgoals (like climbing ladders and jumping over pits) 10 times faster than DeepMind’s original algorithm. The work (“Deriving Subgoals Autonomously to Accelerate Learning in Sparse Reward Domains”) is scheduled to be presented at the ongoing Association for the Advancement of Artificial Intelligence conference in Hawaii on Friday.

“Truly intelligent AI needs to be able to learn to complete tasks autonomously in ambiguous environments,” Associate Professor Fabio Zambetta, who coauthored the paper with RMIT’s Professor John Thangarajah and doctoral student Michael Dann, said in a statement. “We’ve shown that the right kind of algorithms can improve results using a smarter approach rather than purely brute forcing a problem end-to-end on very powerful computers.”

Montezuma’s Revenge has a reputation for being difficult. Researchers pin the blame on the game’s “sparse rewards”: completing a stage requires learning complex tasks with infrequent feedback. As a result, even the best-trained AI agents tend to maximize rewards in the short term rather than work toward a big-picture goal, for example by hitting an enemy repeatedly instead of climbing a rope close to the exit.
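
To make “sparse rewards” concrete, here is a toy sketch. It is not Montezuma’s Revenge and not from the RMIT paper. It uses a hypothetical corridor of cells in which an agent that moves left or right at random receives its only reward at the far end. The function `reach_probability` is an illustrative name. It computes exactly, by dynamic programming over the agent’s position, how likely the agent is to collect that single reward within a fixed step budget.

```python
def reach_probability(chain_length: int, max_steps: int) -> float:
    """Probability that a uniformly random agent, starting at cell 0 of a
    hypothetical corridor, reaches the rewarding cell `chain_length`
    within `max_steps` moves. Moving left from cell 0 leaves it at cell 0."""
    # dist[i] = probability of being at cell i without having reached the goal yet
    dist = [0.0] * chain_length
    dist[0] = 1.0
    reached = 0.0
    for _ in range(max_steps):
        new_dist = [0.0] * chain_length
        for pos, p in enumerate(dist):
            if p == 0.0:
                continue
            # Step left with probability 1/2 (clamped at the wall).
            new_dist[max(pos - 1, 0)] += 0.5 * p
            # Step right with probability 1/2; stepping onto the goal ends the episode.
            if pos + 1 == chain_length:
                reached += 0.5 * p
            else:
                new_dist[pos + 1] += 0.5 * p
        dist = new_dist
    return reached


if __name__ == "__main__":
    for n in (5, 10, 20, 30):
        print(n, reach_probability(n, max_steps=100))
```

For this particular walk, the expected number of steps before the agent first sees any reward grows as n(n+1) for a corridor of length n. Every extra cell without feedback therefore makes random exploration disproportionately worse. That is the intuition behind why sparse-reward games are hard for agents that explore by chance.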

The trick, in this case, lay in how the researchers applied reinforcement learning, an artificial intelligence (AI) technique that uses a system of rewards to drive agents toward certain goals. Rather than having humans identify subgoals for the AI or letting the AI pick its next move at random, the researchers devised a method that rewarded the system for autonomously trying less obvious actions and paths.
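
The article doesn’t spell out the RMIT reward scheme. A common, generic way to reward an agent for trying less obvious actions is a count-based exploration bonus, sketched below as a stand-in technique rather than the paper’s method. The class `CountBonus` and its `beta` parameter are hypothetical. The class adds `beta / sqrt(N(state, action))` to the game’s own reward, so actions the agent has rarely tried pay extra and the extra pay shrinks with repetition.

```python
import math
from collections import defaultdict
from typing import Hashable


class CountBonus:
    """Hypothetical count-based exploration bonus (a generic technique, not
    the RMIT method): shaped = extrinsic + beta / sqrt(visits(state, action))."""

    def __init__(self, beta: float = 0.1) -> None:
        self.beta = beta
        # Number of times each (state, action) pair has been tried so far.
        self.counts: defaultdict = defaultdict(int)

    def shaped_reward(self, state: Hashable, action: Hashable, extrinsic: float) -> float:
        """Record one more try of (state, action) and return the game reward
        plus a novelty bonus that decays with the visit count."""
        self.counts[(state, action)] += 1
        bonus = self.beta / math.sqrt(self.counts[(state, action)])
        return extrinsic + bonus


if __name__ == "__main__":
    shaper = CountBonus(beta=0.1)
    for _ in range(4):
        # The same unrewarded move pays less and less each time it is repeated.
        print(shaper.shaped_reward("room_1", "jump", extrinsic=0.0))
```

With `beta=0.1`, the first try of an action earns a bonus of 0.1 and the fourth earns 0.05. A learner maximizing the shaped reward is therefore nudged toward options it hasn’t exhausted, even when the game itself pays nothing.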

It’s similar to an approach that OpenAI, the nonprofit San Francisco-based AI research company, described in a paper last year. That work likewise used a reward mechanism, Random Network Distillation (RND), to incentivize agents to explore areas of the game map they otherwise wouldn’t have.
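
The core idea of RND can be sketched in a few lines. A randomly initialized “target” network is frozen, and a second “predictor” network is trained to imitate its output. The prediction error serves as an exploration bonus: it stays high for states the agent has rarely seen and shrinks for familiar ones. This toy version is a simplifying assumption throughout. Each network is a single layer, states are short feature vectors, and the predictor is fit by plain SGD. The published version uses convolutional networks and normalization, which are omitted here. The names `RNDBonus`, `error`, and `bonus` are hypothetical.

```python
import math
import random
from typing import List

Vector = List[float]


class RNDBonus:
    """Toy Random Network Distillation novelty bonus (illustrative only).

    Target: frozen random linear layer + tanh. Predictor: trainable linear layer.
    Intrinsic reward = mean squared error between the two outputs."""

    def __init__(self, in_dim: int, out_dim: int, lr: float = 0.1, seed: int = 0) -> None:
        rng = random.Random(seed)
        # Frozen, randomly initialized target network: never updated.
        self.target_w = [[rng.gauss(0.0, 1.0) for _ in range(in_dim)] for _ in range(out_dim)]
        # Predictor starts at zero and is trained to imitate the target.
        self.pred_w = [[0.0] * in_dim for _ in range(out_dim)]
        self.lr = lr

    def _target(self, x: Vector) -> Vector:
        return [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in self.target_w]

    def _predict(self, x: Vector) -> Vector:
        return [sum(w * xi for w, xi in zip(row, x)) for row in self.pred_w]

    def error(self, x: Vector) -> float:
        """Prediction error for x without training; high for unfamiliar states."""
        t, p = self._target(x), self._predict(x)
        return sum((pj - tj) ** 2 for pj, tj in zip(p, t)) / len(t)

    def bonus(self, x: Vector) -> float:
        """Return the intrinsic reward for visiting x, then take one SGD step
        so that the next visit to x (or similar states) pays less."""
        t, p = self._target(x), self._predict(x)
        n = len(t)
        reward = sum((pj - tj) ** 2 for pj, tj in zip(p, t)) / n
        for j, row in enumerate(self.pred_w):
            grad_scale = 2.0 * (p[j] - t[j]) / n  # d(MSE)/d(prediction_j)
            for i, xi in enumerate(x):
                row[i] -= self.lr * grad_scale * xi
        return reward


if __name__ == "__main__":
    rnd = RNDBonus(in_dim=4, out_dim=4)
    room_a = [1.0, 0.0, 0.0, 0.0]  # hypothetical feature vector for a visited area
    room_b = [0.0, 0.0, 1.0, 0.0]  # hypothetical feature vector for an unvisited area
    first = rnd.bonus(room_a)
    for _ in range(49):
        last = rnd.bonus(room_a)
    print("first visit:", first, "50th visit:", last, "unvisited:", rnd.error(room_b))
```

Revisiting the same area drives its bonus toward zero, while areas the predictor has never been trained on keep their original, larger error. An agent maximizing game reward plus this bonus is therefore drawn toward parts of the map it hasn’t explored.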

“Creating an algorithm that can complete video games may sound trivial, but the fact we’ve designed one that can cope with ambiguity while choosing from an arbitrary number of possible actions is a critical advance,” Zambetta said. “It means that, with time, this technology will be valuable to achieve goals in the real world, whether in self-driving cars or as useful robotic assistants with natural language recognition.”

Of course, the researchers’ system isn’t the only one that has demonstrated an aptitude for Montezuma’s Revenge. In two papers last summer, DeepMind described machine learning models for the game: one that learned to master it from YouTube videos, and another that could account for reward signals of “varying densities and scales.” Meanwhile, in November 2018, Uber unveiled Go-Explore, a family of so-called “quality diversity” AI models capable of reliably solving the game up to level 159.