OpenAI, a nonprofit, San Francisco-based AI research company backed by Elon Musk, Reid Hoffman, and Peter Thiel, among other titans of industry, made headlines in June when it announced that the latest version of its Dota 2-playing AI — dubbed OpenAI Five — managed to beat amateur players. Today, it unveiled another first: a robotics system that can manipulate objects with humanlike dexterity.

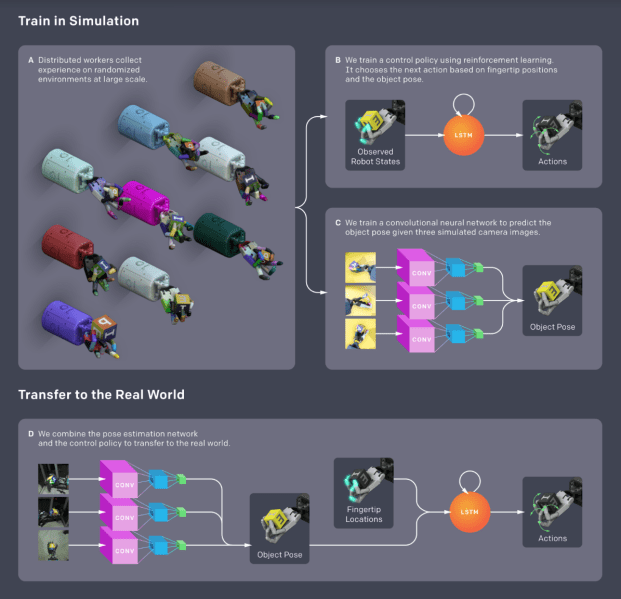

In a forthcoming paper (“Dexterous In-Hand Manipulation”), OpenAI researchers describe a system that uses a reinforcement learning model, in which the AI learns through trial and error, to direct robot hands in grasping and manipulating objects with state-of-the-art precision. All the more impressive, it was trained entirely digitally, in a computer simulation, and wasn’t given any human demonstrations to learn from.

“While dexterous manipulation of objects is a fundamental everyday task for humans, it is still challenging for autonomous robots,” the team writes. “Modern-day robots are typically designed for specific tasks in constrained settings and are largely unable to utilize complex end-effectors … In this work, we demonstrate methods to train control policies that perform in-hand manipulation and deploy them on a physical robot.”

So how’d they do it? The researchers used the MuJoCo physics engine to simulate a physical environment in which a real robot might operate, and Unity to render images for training a computer vision model to recognize poses. But this approach had its limitations, the team writes — the simulation was merely a “rough approximation” of the physical setup, which made it “unlikely” to produce systems that would translate well to the real world.

Above: Novel object manipulations discovered by OpenAI’s robotics system.

Their solution was to randomize aspects of the environment, like its physics (friction, gravity, joint limits, object dimensions, and more) and visual appearance (lighting conditions, hand and object poses, materials, and textures). This both reduced the likelihood of overfitting (a phenomenon that occurs when a neural network learns noise in its training data, which hurts its performance on new data) and increased the chances of producing an algorithm that would successfully choose actions based on real-world fingertip positions and object poses.
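To make the idea concrete, here is a minimal sketch of per-episode randomization in Python. It is not OpenAI's code: the `EpisodeParams` fields, the `sample_episode_params` helper, and every numeric range are hypothetical, chosen only to show fresh physics and lighting values being drawn before each simulated episode.

```python
import random
from dataclasses import dataclass


@dataclass
class EpisodeParams:
    """Hypothetical bundle of randomized settings for one simulated episode."""
    friction_scale: float      # multiplier on a nominal friction coefficient
    gravity: float             # m/s^2
    object_size_scale: float   # multiplier on the nominal object dimensions
    light_intensity: float     # multiplier on the nominal scene lighting


def sample_episode_params(rng: random.Random) -> EpisodeParams:
    # Every range below is an illustrative assumption, not a value from the paper.
    return EpisodeParams(
        friction_scale=rng.uniform(0.7, 1.3),
        gravity=9.81 * rng.uniform(0.95, 1.05),
        object_size_scale=rng.uniform(0.95, 1.05),
        light_intensity=rng.uniform(0.5, 1.5),
    )


if __name__ == "__main__":
    rng = random.Random(0)
    # Each episode sees a slightly different "world", so the policy cannot
    # rely on any single simulator setting being exactly right.
    for episode in range(3):
        print(episode, sample_episode_params(rng))
```

Because no two episodes share the same settings, a policy that does well across all of them has to tolerate the kind of mismatch it will meet on real hardware.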

Next, the researchers trained the model, a recurrent neural network, on 384 machines, each with 16 CPU cores, allowing them to generate roughly two years of simulated experience per hour. After optimizing it on an eight-GPU PC, they moved on to the next step: training a convolutional neural network that would predict the position and orientation of objects in the robot’s “hand” from three simulated camera images.
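For a sense of scale, the figures in that paragraph can be turned into a quick back-of-the-envelope calculation. The Python sketch below assumes a 365-day year and, purely for illustration, that every core contributes equally to simulation; the article does not say how the work was actually divided.

```python
# Figures quoted in the article.
MACHINES = 384
CORES_PER_MACHINE = 16
SIM_YEARS_PER_HOUR = 2  # "roughly two years" of experience per wall-clock hour

# Assumption: a 365-day year.
HOURS_PER_YEAR = 365 * 24

total_cores = MACHINES * CORES_PER_MACHINE
# Simulated hours generated per wall-clock hour across the whole cluster.
cluster_speedup = SIM_YEARS_PER_HOUR * HOURS_PER_YEAR
# Assumption: the load is spread evenly over every core.
per_core_speedup = cluster_speedup / total_cores

print(f"total cores: {total_cores}")
print(f"cluster speedup vs. real time: {cluster_speedup}x")
print(f"per-core speedup vs. real time: {per_core_speedup:.2f}x")
```

Under those assumptions the cluster has 6,144 cores and runs about 17,520 times faster than real time, or a little under three times real time per core.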

Above: A flow diagram illustrating the model-training process.

Once the models were trained, it was on to validation tests. The researchers used a Shadow Dexterous Hand, a five-fingered robotic hand with a total of 24 degrees of freedom, mounted on an aluminum frame, to manipulate objects. Two sets of cameras, motion capture cameras and RGB cameras, served as the system’s eyes, allowing it to track the objects’ position and orientation. (Although the Shadow Dexterous Hand has touch sensors, the team chose to use only its joint-sensing capabilities for fine-grained control over the fingers’ positions.)

In the first of two tests, the algorithms were tasked with reorienting a block labeled with letters of the alphabet. The team chose a random goal, and each time the AI achieved it, they selected a new one until the robot (1) dropped the block, (2) spent more than a minute manipulating the block, or (3) reached 50 successful rotations. In the second test, the block was swapped with an octagonal prism.
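The stopping rules for a trial can be sketched as a short loop. This Python version is illustrative only: `AttemptResult` and `run_trial` are hypothetical names, and it assumes the one-minute limit applies to each goal separately, which the description above leaves open.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

MAX_SUCCESSES = 50       # trial ends after 50 successful rotations
TIMEOUT_SECONDS = 60.0   # assumption: the one-minute limit is per goal


@dataclass
class AttemptResult:
    """Hypothetical outcome of trying to reach one randomly chosen goal."""
    reached_goal: bool
    dropped: bool
    seconds: float


def run_trial(attempt: Callable[[], AttemptResult]) -> Tuple[int, str]:
    """Count consecutive successes until one of the three stop conditions hits."""
    successes = 0
    while successes < MAX_SUCCESSES:
        result = attempt()  # a new random goal is attempted on each call
        if result.dropped:
            return successes, "dropped"
        # An attempt that runs long or never reaches its goal counts as a timeout.
        if result.seconds > TIMEOUT_SECONDS or not result.reached_goal:
            return successes, "timeout"
        successes += 1
    return successes, "max_successes"


if __name__ == "__main__":
    scripted = iter([
        AttemptResult(True, False, 5.0),
        AttemptResult(True, False, 8.0),
        AttemptResult(False, True, 3.0),
    ])
    print(run_trial(lambda: next(scripted)))
```

The number of consecutive successes a trial returns is the natural score to compare between the simulated and the physical hand.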

The result? The models not only exhibited “unprecedented” performance, but naturally discovered types of grasps observed in humans, such as tripod (a grip that uses the thumb, index finger, and middle finger), prismatic (a grip in which the thumb and finger oppose each other), and tip pinch grip. They also learned how to pivot and slide the robot hand’s fingers, and how to use gravitational, translational, and torsional forces to slot the object into the desired position.

“[O]ur system can [not only] rediscover grasps found in humans, but adapt them to better fit the limitations and abilities of its own body,” they wrote.

That’s not to suggest it’s a perfect system. It wasn’t explicitly trained to handle multiple objects, and it struggled to rotate a third, spherical object. And in the second test, there was a measurable performance gap between the simulation and the real robot.

But in the end, the results demonstrate the potential of contemporary deep learning algorithms, the researchers conclude: “[These] algorithms can be applied to solving complex real-world robotics problems which are beyond the reach of existing non-learning-based approaches.”